Overview

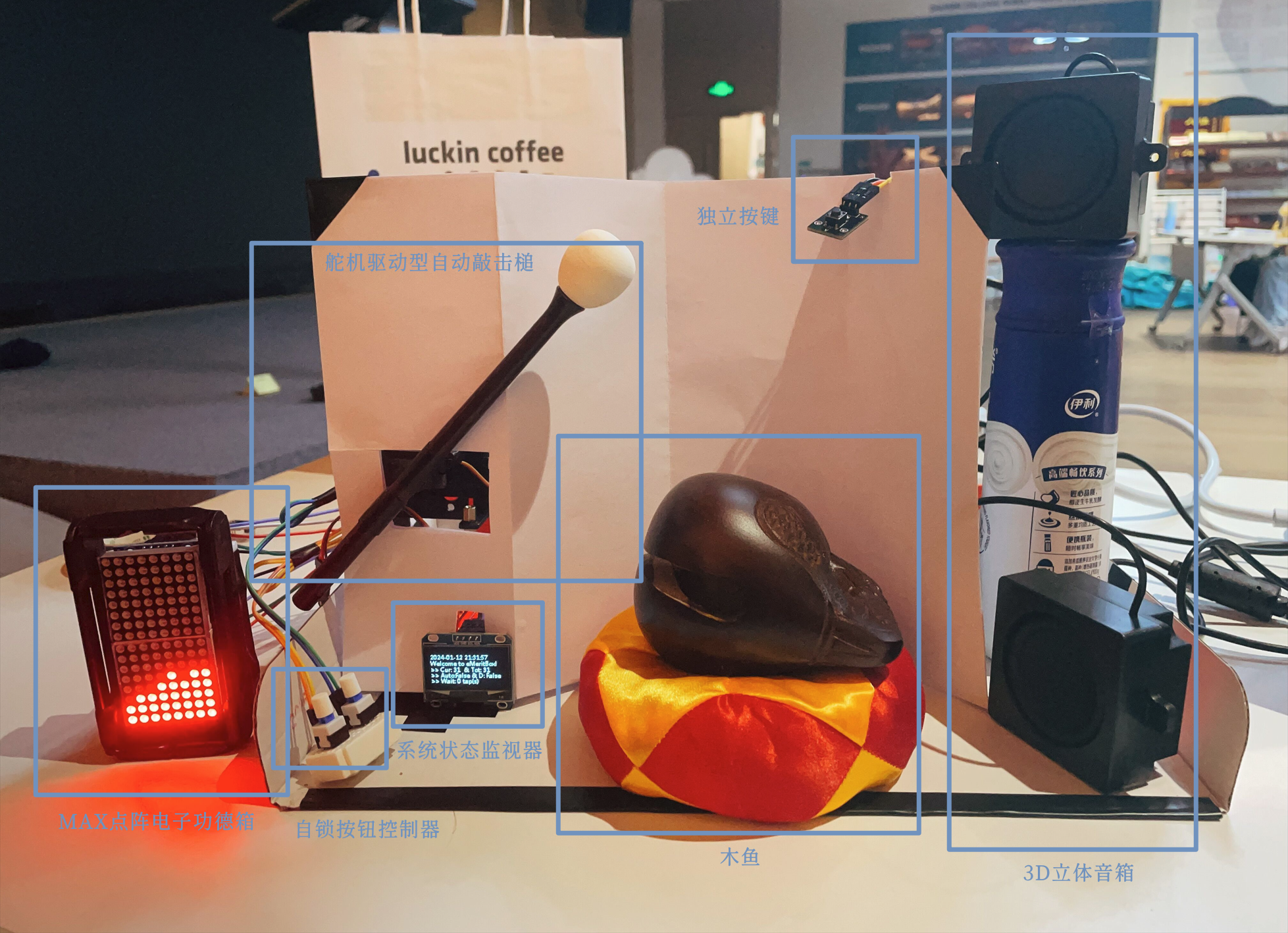

The eMeritBox project combines traditional Buddhist cultural elements with modern technology in an interactive, gravity-sensing electronic donation box. Built on a Raspberry Pi, the system integrates PWM control, motion sensing, and a web-based interface to bring the traditional donation box into a digital setting while keeping the experience meaningful for the user.

Results

- System Features:

- Automatic wooden fish strikes with real-time donation ball accumulation.

- Gravity-sensing motion control for dynamic donation ball movement.

- Dual operational modes: manual and auto donation switching.

- Achievements:

- Successfully implemented a complete hardware-software system using Raspberry Pi and Flask.

- Developed reusable classes for matrix display and gravity sensing, enabling future adaptations.

GitHub (Chinese README) | Presentation PDF (Chinese version)

Technical Details

- System Architecture:

- Controller: Raspberry Pi handles signal processing, PWM control, and web server operations.

- Modules:

- MG-90 servo for wooden fish strikes.

- GY-25 gyroscope for motion sensing.

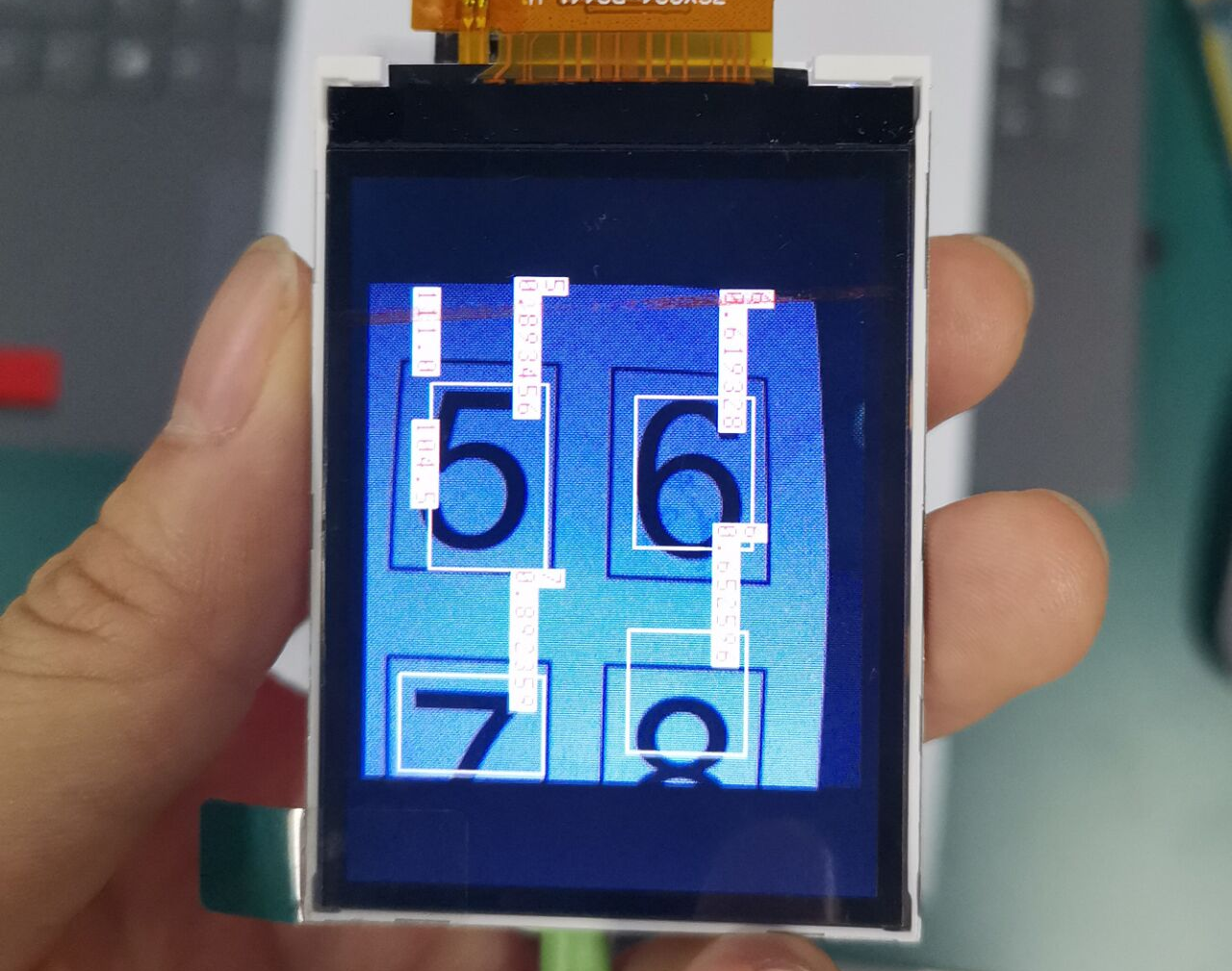

- MAX7219 matrix display for donation ball visualization.

- Key Functionalities:

- Gravity-Sensing Donation: Balls dynamically move based on the box’s tilt angle.

- Flask Web Server: Supports browser-based remote operation of wooden fish strikes.

- Matrix Display: Visualizes donation balls in real-time, reflecting their position and state.

- Software Implementation:

- Developed Python classes for modular control:

  - `GY25Ctrl` for gyroscope data processing.
  - `MatrixCtrl` for donation ball display updates.
  - `BGMPlayer` for background music playback.

- Solved hardware conflicts by reconfiguring UART ports and enabling additional I²C channels.
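The gravity-sensing behavior described above can be illustrated with a minimal sketch. It is not the project's actual `GY25Ctrl` or `MatrixCtrl` code. It assumes an 8x8 matrix, a roll angle that maps to the horizontal axis and a pitch angle that maps to the vertical axis, and a hypothetical 5-degree dead zone. The names `tilt_to_step`, `step_balls`, and `to_rows` are made up for illustration.

```python
from typing import List, Set, Tuple

GRID = 8        # assumption: a single 8x8 LED matrix
DEADZONE = 5.0  # assumption: tilt below this many degrees is treated as level

Cell = Tuple[int, int]  # (x, y) position of one donation ball


def tilt_to_step(angle_deg: float, deadzone: float = DEADZONE) -> int:
    """Convert a tilt angle into a one-cell step: -1, 0, or +1."""
    if angle_deg > deadzone:
        return 1
    if angle_deg < -deadzone:
        return -1
    return 0


def step_balls(balls: Set[Cell], roll: float, pitch: float) -> Set[Cell]:
    """Move every ball at most one cell in the downhill direction."""
    dx, dy = tilt_to_step(roll), tilt_to_step(pitch)
    if dx == 0 and dy == 0:
        return set(balls)
    # Process the balls closest to the downhill edge first so that they
    # free their cells before the balls behind them try to move.
    order = sorted(balls, key=lambda c: c[0] * dx + c[1] * dy, reverse=True)
    occupied = set(balls)
    for x, y in order:
        nx = min(max(x + dx, 0), GRID - 1)  # clamp to the matrix edges
        ny = min(max(y + dy, 0), GRID - 1)
        if (nx, ny) != (x, y) and (nx, ny) not in occupied:
            occupied.remove((x, y))
            occupied.add((nx, ny))
    return occupied


def to_rows(balls: Set[Cell]) -> List[int]:
    """Pack ball positions into one byte per row (bit x set = LED on)."""
    rows = [0] * GRID
    for x, y in balls:
        rows[y] |= 1 << x
    return rows
```

Calling `step_balls` once per display frame with the latest angles makes the balls roll toward the lower edge and stack against it. The row bytes from `to_rows` would then be handed to whatever driver writes to the MAX7219; the bit order depends on the wiring and is an assumption here.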


Challenges

- UART hardware resource conflicts: Raspberry Pi’s default UART settings caused resource contention.

- Solution: Re-mapped hardware and mini UARTs (ttyAMA0 ↔ ttyS0) and configured multiple UART ports (+ttyAMA1, 2, …) for simultaneous operation.

- I²C channel conflicts: Dual I²C channels on Raspberry Pi conflicted with camera usage.

- Solution: Disabled the camera function and enabled additional I²C channels with `dtparam=i2c_vc=on`.

- SPI and I²C competing with UART ports: Enabling SPI and I²C modules on the Raspberry Pi caused UART port contention.

- Solution: Adjusted hardware configurations to optimize resource allocation.

- Synchronization of Multiple Modules: Managing the simultaneous operation of PWM, matrix display, and motion sensing.

- Solution: Utilized multi-threading to ensure real-time responsiveness and system stability.
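The multi-threading approach mentioned in the last item can be sketched as follows. This is an illustrative pattern, not the project's code: a background thread keeps polling the sensor and stores the latest reading behind a lock, while the main loop reads that value once per display frame. `SharedTilt`, `sensor_worker`, `run_for`, and the callbacks `read_tilt` and `on_frame` are hypothetical names, and the 10 ms period is an assumed value.

```python
import threading
import time
from typing import Callable, Tuple


class SharedTilt:
    """Latest (roll, pitch) reading, guarded by a lock."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._value: Tuple[float, float] = (0.0, 0.0)

    def set(self, roll: float, pitch: float) -> None:
        with self._lock:
            self._value = (roll, pitch)

    def get(self) -> Tuple[float, float]:
        with self._lock:
            return self._value


def sensor_worker(read_tilt: Callable[[], Tuple[float, float]],
                  shared: SharedTilt,
                  stop: threading.Event,
                  period: float = 0.01) -> None:
    """Poll the sensor until asked to stop."""
    while not stop.is_set():
        shared.set(*read_tilt())
        stop.wait(period)  # sleeps, but wakes immediately when stop is set


def run_for(read_tilt: Callable[[], Tuple[float, float]],
            on_frame: Callable[[float, float], None],
            frames: int,
            period: float = 0.01) -> bool:
    """Run the display loop for a fixed number of frames.

    Returns True if the sensor thread shut down cleanly.
    """
    shared = SharedTilt()
    stop = threading.Event()
    worker = threading.Thread(
        target=sensor_worker,
        args=(read_tilt, shared, stop, period),
        daemon=True,
    )
    worker.start()
    try:
        for _ in range(frames):
            on_frame(*shared.get())  # the display never blocks on the sensor
            time.sleep(period)
    finally:
        stop.set()
        worker.join(timeout=1.0)
    return not worker.is_alive()
```

Keeping the sensor read in its own thread means a slow or stalled serial read does not freeze the display or the servo. The same pattern would extend to one worker per module, each with its own shared state.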

Reflection and Insights

The eMeritBox modernizes traditional Buddhist donation practices by pairing spiritual elements with embedded technology. By reimagining the donation process with dynamic visuals and interactive controls, the project shows how technology can both preserve and renew cultural traditions. The hardware-software integration challenges also underscored the value of modular design and multi-threaded programming in building robust embedded systems.